[ All work on this page is a collaboration with Dr. Rusty Lansford from Children's Hospital Los Angeles (CHLA) and the USC Keck School of Medicine ]

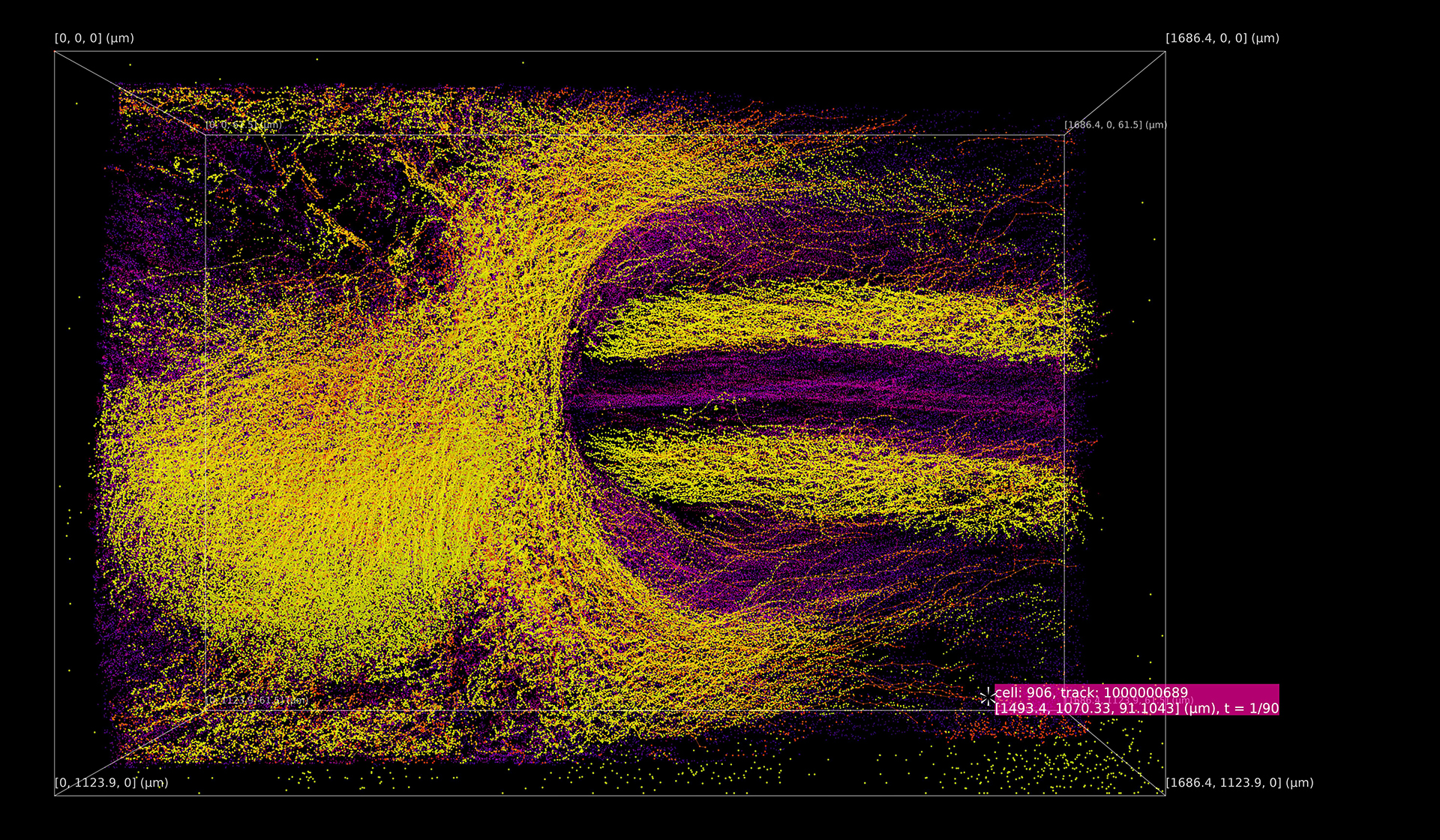

There exists a critical need for new tools and techniques to visualize, filter, interpret, and share high-dimensional image datasets. New sensors allow scientists to collect such massive amounts of information from events such as cell migration in cardiogenesis that it can be difficult to perceive and understand all the hidden patterns and narratives using traditional visualization strategies. John Carpenter (Oblong and USC School of Cinematic Arts MA+P) and Dr. Rusty Lansford (USC Keck School of Medicine) demonstrate that immersive visualizations and spatial navigation can play an essential role in understanding complex multidimensional datasets.

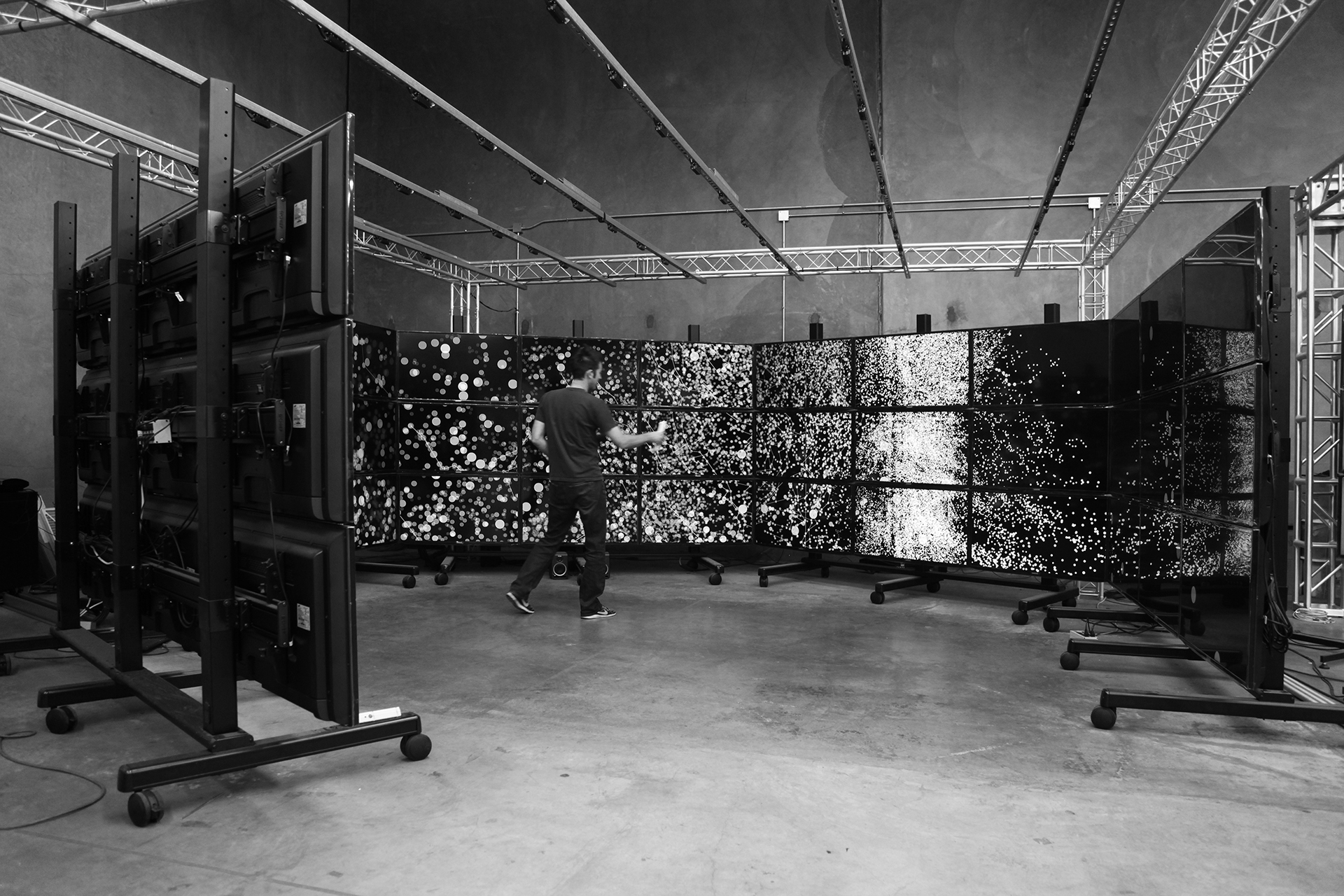

Using g-speak, Rusty and John designed a 320° immersive workspace that permits gesture-based navigation of volumetric image sets from early heart formation. The application runs in real time on five computers across 45 screens (93,312,000 pixels), and the ultrasonic emitters and wand provide precise spatial interactions with the system. The raw data are multispectral 4D (xyzt) confocal microscopy image sets (11,088 images: 126 images every 7.5 minutes for 11 hours) that underwent quantitative analysis to generate a multidimensional dataset of 460.6K points. The goal of the work was to enable the visualization of individual cell movements en masse while maintaining the integrity of the data and providing an intuitive new way to navigate and explore early cardiogenesis.
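As a quick sanity check, the figures quoted above can be reproduced with a few lines of arithmetic. This is only an illustrative sketch: the variable names are made up for the example, and the per-screen resolution of 1920×1080 is an assumption (the page does not state it), chosen because it matches the quoted pixel total.

```python
# Acquisition figures as stated in the text.
images_per_timepoint = 126   # images captured at each time point
interval_minutes = 7.5       # time between captures
duration_hours = 11          # total length of the recording

# Number of time points in the recording: 660 minutes / 7.5 minutes.
timepoints = int(duration_hours * 60 / interval_minutes)
total_images = images_per_timepoint * timepoints

# Display wall figures. The screen count comes from the text;
# the 1920x1080 resolution per screen is an ASSUMPTION.
screens = 45
pixels_per_screen = 1920 * 1080
total_pixels = screens * pixels_per_screen

print(f"{timepoints} time points, {total_images:,} images")
print(f"{total_pixels:,} pixels across {screens} screens")
```

Under that assumption, both totals agree with the numbers given in the description: 11,088 images and 93,312,000 pixels.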

Gesture -- which by its nature is spatial -- is an ideal mode for interacting with multidimensional datasets because it provides a seamless, intuitive way to connect with and navigate the datascape. Placing the data in a room-sized (human-scaled) pixel space provides new opportunities for visualization and allows for exploration of the data from multiple perspectives at different spatial and temporal resolutions. The social nature of the space and the ability to have multiple users driving the interactions with the system create opportunities for new forms of scientific collaboration and for communicating research.

John Carpenter and Dr. Rusty Lansford were recently awarded a grant from the USC Bridge Art + Science Alliance (BASA) to document their work. This video was directed by Lily Darragh Harty and produced by Kyle McClary in collaboration with Oblong, USC, and CHLA.

More info: Oblong blog post + Medium Article